Artificial intelligence becomes fundamentally different the moment it gains the ability to act.

Generating text is comparatively safe.

Executing commands is not.

When AI systems can modify files, run workflows, automate browsers, or interact with operating systems, a new problem appears:

How do you prevent automation from causing unintended damage?

Many agent systems attempt to solve this with warnings or configuration settings. ProWorkBench approaches the problem differently — by making safety part of the execution model itself.

The Problem With Autonomous Execution

Most AI agent failures don’t come from flawed reasoning.

They come from execution without checkpoints.

Common failure patterns include:

- commands running automatically without review

- incorrect assumptions leading to destructive actions

- browser automation operating outside intended scope

- scripts modifying the wrong files

- cascading actions triggered by a single bad decision

When execution happens silently, recovery becomes difficult or impossible.

The issue isn’t autonomy.

The issue is uncontrolled autonomy.

Tools: Turning Capability Into Structure

In ProWorkBench, actions are not free-form instructions.

They are structured as tools.

A tool represents a specific capability, such as:

- interacting with the file system

- performing browser automation

- executing commands

- running workflows

- querying services

By defining execution through tools, actions become:

- predictable

- inspectable

- governable

Instead of the AI improvising raw system access, it works within defined capabilities.
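To make the idea concrete, here is a minimal Python sketch of what a tool registry could look like. It is not ProWorkBench's actual API: `Tool`, `ToolRegistry`, and the `text.count_words` tool are hypothetical names invented for illustration. The point is only that a call either matches a registered capability and its declared parameters, or it is rejected.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Tool:
    """A named capability with a declared parameter list (hypothetical shape)."""
    name: str
    description: str
    params: Tuple[str, ...]      # names of the keyword arguments the tool accepts
    run: Callable[..., Any]      # the function that performs the work


class ToolRegistry:
    """The only entry point for actions: anything not registered cannot be called."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        if tool.name in self._tools:
            raise ValueError(f"tool already registered: {tool.name}")
        self._tools[tool.name] = tool

    def describe(self) -> List[str]:
        """Inspectable: list every capability and its parameters."""
        tools = sorted(self._tools.values(), key=lambda t: t.name)
        return [f"{t.name}({', '.join(t.params)}): {t.description}" for t in tools]

    def call(self, name: str, **kwargs: Any) -> Any:
        """Predictable: reject unknown tools and mismatched parameters."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        tool = self._tools[name]
        if set(kwargs) != set(tool.params):
            raise ValueError(
                f"{name} expects parameters {tool.params}, got {tuple(sorted(kwargs))}"
            )
        return tool.run(**kwargs)


# A deliberately harmless example tool (an assumption, not a real ProWorkBench tool).
registry = ToolRegistry()
registry.register(Tool(
    name="text.count_words",
    description="Count whitespace-separated words in a string",
    params=("text",),
    run=lambda text: len(text.split()),
))

print(registry.describe())
print(registry.call("text.count_words", text="approve before execute"))
```

Because every action goes through `call`, there is a single place to log, inspect, or restrict what the assistant can do.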

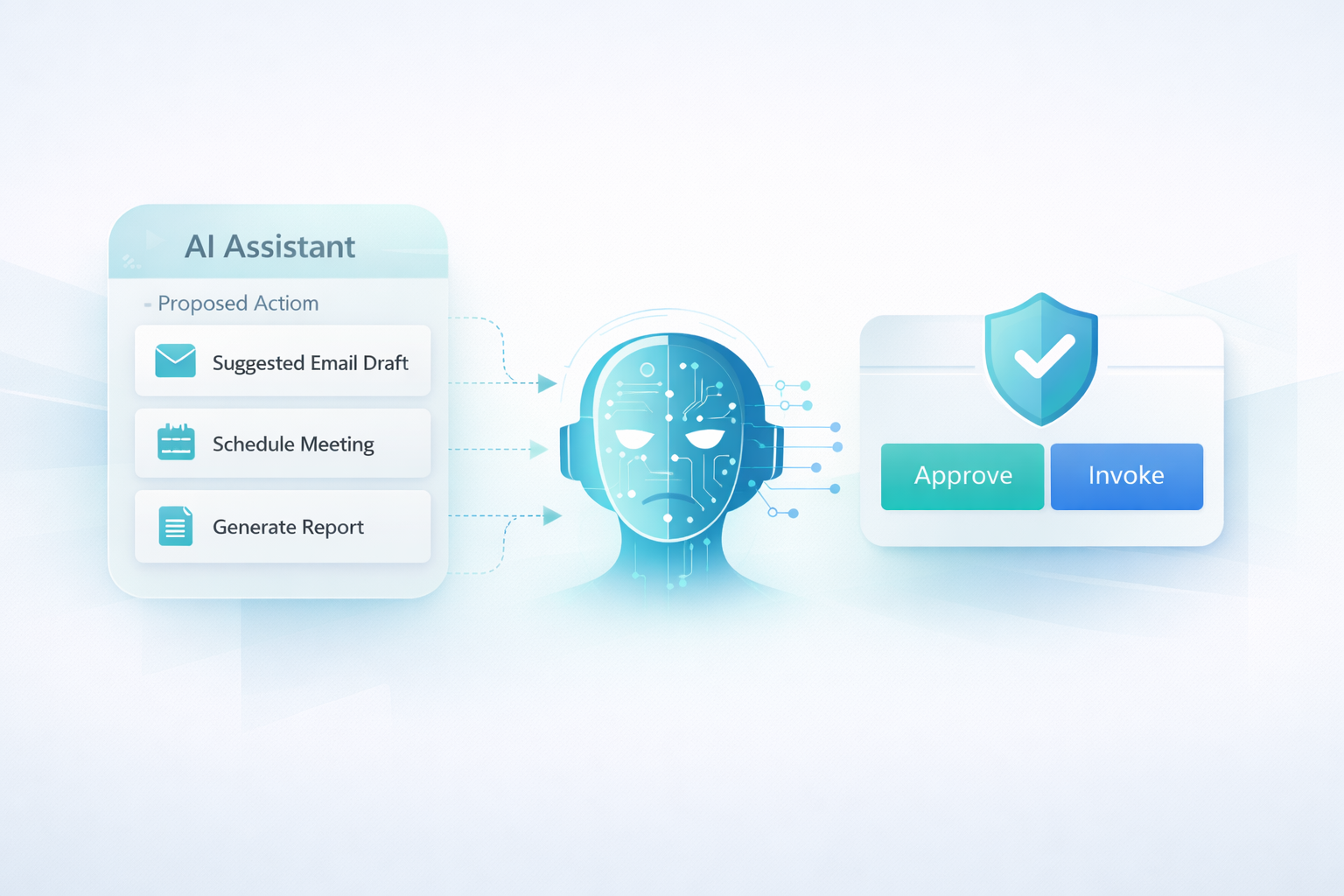

Proposals Before Execution

One of the core design principles in ProWorkBench is simple:

Nothing executes immediately.

When the assistant decides an action may help, it creates a proposal.

A proposal shows:

- what action is requested

- which tool will be used

- what parameters are involved

- what outcome is expected

This transforms automation from a hidden process into a visible decision.

You see what the system intends to do before anything happens.
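As a rough illustration, the four items above map naturally onto a small record type. The sketch below is an assumption about how such a proposal might be modeled in Python, not ProWorkBench's real data format; `Proposal`, its field names, and the `fs.rename` tool name are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass(frozen=True)
class Proposal:
    """A description of an intended action. Creating one executes nothing."""
    action: str                                   # what action is requested
    tool: str                                     # which tool will be used
    parameters: Dict[str, Any] = field(default_factory=dict)
    expected_outcome: str = ""                    # what outcome is expected

    def summary(self) -> str:
        """Render the proposal as plain text for a human reviewer."""
        lines = [
            f"Action:   {self.action}",
            f"Tool:     {self.tool}",
            "Parameters:",
        ]
        for key in sorted(self.parameters):
            lines.append(f"  {key} = {self.parameters[key]!r}")
        lines.append(f"Expected: {self.expected_outcome}")
        return "\n".join(lines)


# Hypothetical example: the file names and tool name are made up.
proposal = Proposal(
    action="Rename the draft report",
    tool="fs.rename",
    parameters={"src": "report-draft.md", "dst": "report.md"},
    expected_outcome="report-draft.md becomes report.md; no other files change",
)
print(proposal.summary())
```

Keeping the proposal as inert data is the design choice that matters here: it can be displayed, logged, or compared against a policy without any side effects.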

Explicit Invoke: Human Control at the Right Moment

After you review a proposal, it executes only when you explicitly choose to Invoke it.

This approval step is intentional.

It ensures:

- risky actions require awareness

- unexpected behavior is caught early

- automation stays collaborative rather than acting out of sight

The goal isn’t friction.

The goal is preventing irreversible mistakes.
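One way to picture this gate is a queue in which proposed work sits as a deferred function until someone explicitly invokes it. The following Python sketch is illustrative only and assumes nothing about ProWorkBench internals; `PendingAction` and `ApprovalQueue` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class PendingAction:
    description: str
    run: Callable[[], Any]       # deferred work; not called until invoke()
    status: str = "proposed"     # "proposed" -> "invoked" or "rejected"
    result: Any = None


class ApprovalQueue:
    """Holds proposed actions; nothing runs until invoke() is called."""

    def __init__(self) -> None:
        self._actions: List[PendingAction] = []

    def propose(self, description: str, run: Callable[[], Any]) -> PendingAction:
        action = PendingAction(description, run)
        self._actions.append(action)
        return action

    def pending(self) -> List[PendingAction]:
        return [a for a in self._actions if a.status == "proposed"]

    def invoke(self, action: PendingAction) -> Any:
        if action.status != "proposed":
            raise RuntimeError(f"cannot invoke an action that is {action.status}")
        action.status = "invoked"    # mark first so the action cannot be replayed
        action.result = action.run()
        return action.result

    def reject(self, action: PendingAction) -> None:
        if action.status != "proposed":
            raise RuntimeError(f"cannot reject an action that is {action.status}")
        action.status = "rejected"


notes: List[str] = []
queue = ApprovalQueue()
action = queue.propose(
    "Append 'backup created' to notes",
    lambda: notes.append("backup created"),
)
print(notes)           # the side effect has not happened yet
queue.invoke(action)   # the explicit approval step
print(notes)
```

A rejected or already-invoked action cannot be run again, which keeps each approval tied to exactly one execution.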

Policies: Guardrails That Scale

As systems grow more capable, manual oversight alone isn’t enough.

ProWorkBench introduces policies to define boundaries for tool usage.

Policies can determine:

- which tools are allowed

- when approval is required

- what environments actions can affect

- which operations are blocked entirely

This allows governance to scale alongside capability.

Automation becomes safer not by limiting power, but by structuring it.
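The four kinds of rule listed above can be sketched as a single evaluation function. This is a simplified Python illustration under stated assumptions, not ProWorkBench's policy format: `Policy`, `Decision`, the tool names, and the environment names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    BLOCK = "block"


@dataclass(frozen=True)
class Policy:
    allowed_tools: FrozenSet[str]          # which tools are allowed
    approval_required: FrozenSet[str]      # tools that need explicit approval
    allowed_environments: FrozenSet[str]   # environments actions can affect
    blocked_operations: FrozenSet[str]     # operations blocked entirely

    def evaluate(self, tool: str, operation: str, environment: str) -> Decision:
        # Deny rules are checked first so that a block always wins.
        if operation in self.blocked_operations:
            return Decision.BLOCK
        if tool not in self.allowed_tools:
            return Decision.BLOCK
        if environment not in self.allowed_environments:
            return Decision.BLOCK
        if tool in self.approval_required:
            return Decision.REQUIRE_APPROVAL
        return Decision.ALLOW


# Hypothetical policy: every name below is invented for the example.
policy = Policy(
    allowed_tools=frozenset({"fs", "browser"}),
    approval_required=frozenset({"fs"}),
    allowed_environments=frozenset({"workspace"}),
    blocked_operations=frozenset({"fs.delete_recursive"}),
)

print(policy.evaluate("fs", "fs.write", "workspace"))
print(policy.evaluate("shell", "shell.exec", "workspace"))
```

Checking deny rules before anything else means that adding a new allowed tool can never accidentally unblock an operation that was explicitly forbidden.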

Why Silent Automation Fails

Systems that execute automatically often fail in subtle ways:

- small mistakes compound quickly

- users lose visibility into actions

- debugging becomes difficult

- trust erodes

When users cannot see decision boundaries, they stop trusting the system.

Approvals-first execution restores transparency.

Governance Without Slowing Work

A common misconception is that approvals slow automation.

In practice, they do the opposite.

Because proposals are clear and structured:

- decisions are faster

- risks are visible immediately

- workflows become repeatable

- confidence increases

Users move faster because they understand what will happen next.

Connecting Governance and Local Execution

ProWorkBench combines approvals-first execution with a local-first architecture.

This means:

- execution happens within your environment

- policies remain under your control

- sensitive operations do not depend on trusting an external service

Together, tools, policies, and approvals create a governed execution model designed for real-world use.

If you’re new to governed autonomy, start here:

👉 What Is a Governed Autonomous AI System?


And for the architectural perspective:

👉 Local-First AI Agents: Why Execution Should Stay on Your Machine


Final Thought

Autonomous AI doesn’t fail because it is powerful.

It fails when power lacks structure.

By combining tools, policies, and explicit approvals, ProWorkBench turns autonomy into something practical — a system that can act, while still keeping humans firmly in control.

That balance is what makes automation usable at scale.
